August is the ideal month to run focused A/B experiments that will sharpen your Q4 messaging. With slower holiday campaigns and fewer competing launches, you can collect clean engagement data and iterate quickly. This post lays out five practical experiments you can run across your professional network posts in August, including headlines, hooks, calls to action, image versus no image, and posting times. Each experiment includes a sample hypothesis, recommended sample size and measurement window, and metrics to track so you can move into September and October with confidence.

These tests are designed for content creators, community managers, and marketing teams who want to improve performance using the best LinkedIn engagement strategies while preparing for peak season. The tactics are practical and repeatable, and they are built to integrate with a content calendar that scales. Read on for step-by-step setups, analysis tips, and quick templates you can copy into your experiment tracker.

Why run A/B tests in August

August provides a balance of attention and calm. Many companies are between campaign cycles, and professionals have more breathing room to engage with thoughtful posts. That window makes it easier to gather reliable signals without the noise of large-scale ad pushes and seasonal promotions. Running experiments now helps you optimize messaging, creatives, and timing well before Q4 amplification begins. Learn more in our post on Thought Leadership Series: Building a Q3 narrative arc that peaks in September.

When preparing your experiments, align each test to a business question. Are you trying to increase reactions and shares, drive profile visits, or generate newsletter signups? The best LinkedIn engagement strategies start with clarifying the primary conversion you care about. That focus guides hypothesis design, sample segmentation, and metric selection so results are actionable.

Finally, August experiments can be short and iterative. Use 10 to 21 day windows for most tests, and ramp what works into a content calendar. Short cycles let you learn, refine, and validate before the heavier Q4 period. Below are five experiments that cover creative, copy, and timing variables that influence engagement.

Experiment 1: Headline variations

Why test headlines? The first line or headline determines whether someone reads the rest of your post. Small changes in phrasing, specificity, or tone can produce large shifts in read-through and engagement. For this test, change only the headline wording and keep the body content and image constant so you can confidently attribute differences to phrasing.

Sample hypotheses and setups:

Hypothesis A: "Specificity wins" — Posts with numbers and explicit outcomes will get higher comment rates than open-ended questions.

Hypothesis B: "Authority tone vs conversational tone" — Direct authority headlines will yield more reshares, while conversational headlines will create more comments.

Test design and measurement window:

Create three headline variants for the same post body: one numeric/specific, one curiosity-driven question, and one conversational statement.

Publish each variant on comparable weekdays and times across the sample window to control for timing. If you have a limited audience, stagger by a week and use the same audience segments.

Recommended measurement window: 14 days per variant. If traffic is high, you can compress to 10 days; if your audience is smaller, extend to 21 days.

Metrics to track:

Impressions and view rate

Click-throughs to profile or article

Reactions, comments, and reshares

Average engagement rate per impression

Interpreting results: Prioritize movement in engagement rate per impression and meaningful actions like comments or profile clicks. If a specific headline increases impressions but not meaningful engagement, explore whether it is attracting the right audience or just curiosity clicks.

Experiment 2: Hooks that open the post

Hooks are the opening lines that follow the headline and determine read-through rate. The best LinkedIn engagement strategies treat the hook as the bridge from headline to body. Hooks can use storytelling, data, problem statements, or benefits. This experiment tests which hook type drives higher read-through, shares, and conversation. Learn more in our post on August Growth Sprint: Weekly content sprints using our post ideas generator.

Sample hypotheses and setups:

Hypothesis A: A hook that starts with a short, relatable anecdote will generate higher comments than a data-first hook.

Hypothesis B: A problem/solution hook will drive more saves and shares than a benefit-first hook.

Test design and measurement window:

Select a single post idea and write four variations that change only the first two sentences. Examples: anecdote, data point, direct question, and problem statement.

Hold headline and CTA steady to isolate the hook variable.

Recommended measurement window: 10 to 14 days per variation. If you can post multiple variants at different times in a single week to non-overlapping audiences, do so to save time.

Metrics to track:

Read-through rate measured by time on post or content clicks if available

Comments and comment sentiment

Saves and reshares

Replies to direct asks included in the hook

Actionable tip: If anecdotal hooks win, build a template for converting case studies into two-sentence anecdotes whose opening words land within the first 30 to 60 characters of a post, so the hook stays visible in feed previews.

Experiment 3: Calls to action that convert

Calls to action guide your audience to take the next step. The CTA can be subtle or direct. Testing CTAs helps you determine which phrasing and placement produce the best conversion rates for the action you care about, whether that is signing up for a newsletter, downloading a guide, or joining a discussion. Learn more in our post on Micro-video briefs for August: 60–90 second scripts that drive demo requests.

Sample hypotheses and setups:

Hypothesis A: A soft CTA that invites comments will produce more replies than a hard CTA that asks for an opt-in.

Hypothesis B: Positioning the CTA at the top of the post yields higher click rates than positioning it at the end.

Test design and measurement window:

Pick one desired action and create four variants: soft comment invite, direct sign up link, value-first CTA (highlight what they get), and top-positioned CTA. Keep the rest of the copy consistent.

Place tracking parameters or unique landing pages on direct links to measure conversion from each variant.

Recommended measurement window: 14 to 21 days to capture both immediate and delayed responses.

Metrics to track:

Click-through rate on CTA links

Conversion rate on landing pages

Engagement on the post itself, including comments that indicate intent

Cost or effort per conversion if you are running paid amplification

Actionable tip: Use comment-based CTAs to generate social proof in the thread, and consider pinning or following up on high-quality replies to keep momentum. For direct CTAs, make sure the landing page fulfills the promise immediately to lower friction and maximize conversion.
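To make the tracking-parameter step concrete, here is a minimal Python sketch that appends UTM-style query parameters to a landing page link, one per link-bearing CTA variant. The parameter names follow the common UTM convention, but the URL, campaign name, and variant labels are placeholders invented for illustration, so swap in whatever your analytics setup expects.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def tag_link(base_url: str, campaign: str, variant: str) -> str:
    """Return base_url with UTM-style parameters that identify the variant."""
    parts = urlparse(base_url)
    query = dict(parse_qsl(parts.query))  # keep any parameters already on the link
    query.update({
        "utm_source": "linkedin",   # assumed source label
        "utm_medium": "social",     # assumed medium label
        "utm_campaign": campaign,
        "utm_content": variant,     # this is what separates the variants
    })
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical landing page and variant labels.
for variant in ["direct-signup", "value-first", "top-cta"]:
    print(tag_link("https://example.com/checklist", "august-cta-test", variant))
```

Each variant then shows up as its own `utm_content` value in your analytics reports, which keeps conversions attributable to a single post.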

Experiment 4: Image vs no image (creative presence)

Visuals can increase attention but not all images perform equally. This experiment tests the presence of an image versus no image, and optionally the type of image such as headshot, data graphic, or abstract illustration. The goal is to find which approach increases impressions, dwell time, and conversion for your audience while aligning with your brand voice.

Sample hypotheses and setups:

Hypothesis A: Posts with a professional headshot will get higher profile clicks, while posts with a clear data graphic will get more saves and shares.

Hypothesis B: Plain text posts without images will drive deeper comments for long-form content compared to posts with images.

Test design and measurement window:

Create three versions of the same post: image A (headshot), image B (data graphic or illustration), and no image. Keep copy and CTA identical.

Post each variant in the same general time window and on comparable days to control for external factors.

Recommended measurement window: 14 to 21 days per variant. If you have enough posting capacity, run them concurrently on different audience subsets.

Metrics to track:

Impressions and view rate

Engagement rate per impression

Profile clicks and link clicks for posts with CTAs

Comments and qualitative feedback about the image

Interpretation guidance: If the headshot variant increases profile clicks, it suggests your audience responds to individual trust signals. If the data graphic drives saves and shares, prioritize easy-to-read visuals in future posts. If the no-image variant leads to richer conversation, that implies your audience values substance over visual hooks and you can lean into longer text posts for certain topics.

Experiment 5: Optimal posting times for meaningful engagement

Timing affects reach and engagement because audience availability varies across days and hours. This test is about finding posting windows that maximize meaningful interactions rather than vanity metrics. The objective is to identify up to three repeatable posting slots you can use in Q4.

Sample hypotheses and setups:

Hypothesis A: Early morning posts will get more clicks from active professionals, while late afternoon posts will generate more comments and discussion.

Hypothesis B: Midweek windows produce higher professional engagement than early or late week posts.

Test design and measurement window:

Select the same post content and publish it at different times of day across multiple days: early morning, late morning, early afternoon, late afternoon, and evening. Repeat this schedule across two weeks to gather enough samples.

If possible, post the same content across different days of week to test weekday patterns versus weekend behavior.

Recommended measurement window: 21 days to capture day-of-week variance and to accumulate sufficient data for each slot.

Metrics to track:

Engagement rate per impression by time slot

Number of meaningful comments and replies within 48 hours

Click-through and conversion rates if the post includes links

Time to first comment, as early engagement boosts distribution

Actionable tip: Pair timing tests with small paid boosts for low sample slots to accelerate learning if you face audience constraints. Always normalize by impressions so you compare engagement rates rather than absolute numbers.
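Normalizing by impressions can be sketched in a few lines of Python. The post records below are made-up numbers and the slot labels are only examples. The idea is to pool impressions and engagements per slot before dividing, so a slot with one unusually large post does not dominate.

```python
from collections import defaultdict

# Hypothetical post records: (time slot, impressions, engagements).
posts = [
    ("early morning", 800, 32),
    ("early morning", 1200, 48),
    ("late afternoon", 400, 24),
    ("late afternoon", 600, 36),
    ("evening", 300, 9),
]


def rate_by_slot(records):
    """Pool impressions and engagements per slot, then divide once per slot."""
    totals = defaultdict(lambda: [0, 0])
    for slot, impressions, engagements in records:
        totals[slot][0] += impressions
        totals[slot][1] += engagements
    # Skip slots with no impressions to avoid dividing by zero.
    return {slot: eng / imp for slot, (imp, eng) in totals.items() if imp > 0}


rates = rate_by_slot(posts)
for slot, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(f"{slot}: {rate:.1%}")
```

In this invented data the early morning slot collects more engagements in absolute terms (80 versus 60), yet late afternoon has the higher rate (6.0% versus 4.0%), which is why the comparison uses rates.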

Designing experiments for statistical clarity

To get actionable results, design tests that isolate one variable at a time. Avoid changing both image and CTA in the same test. Keep the rest of the content consistent. Use consistent copy length and structure where applicable to reduce noise.

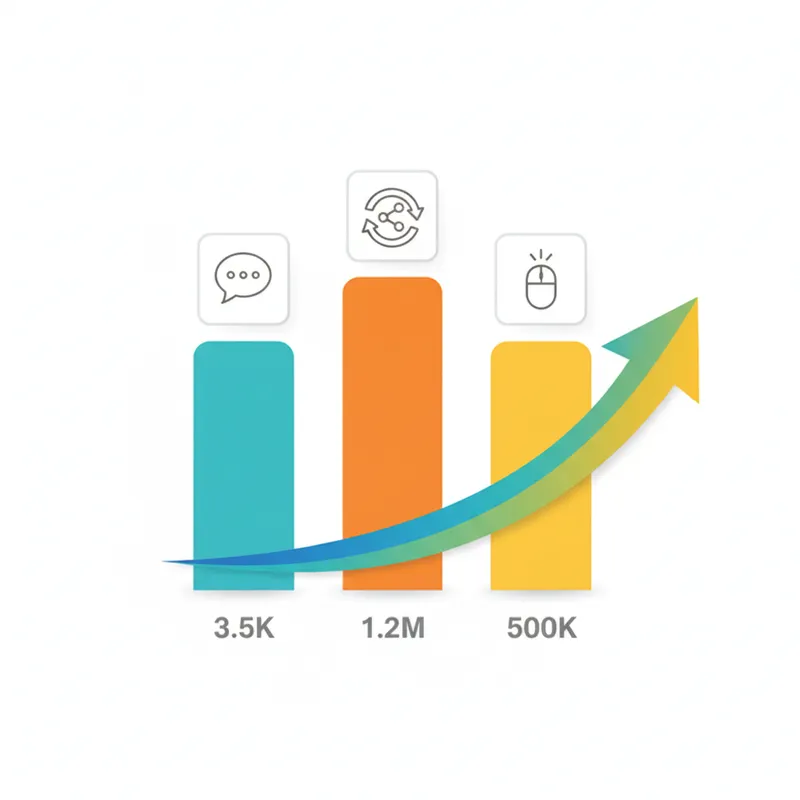

Sample size and segmentation guidance:

If your typical post gets 500 to 2,000 impressions, aim for at least 500 impressions per variant to collect early signals. For reliable conclusions, shoot for 1,000 impressions per variant.

Segment by audience when possible. If you manage multiple pages or profiles, run the same test simultaneously across similar audience segments to increase sample size and validate consistency.

Use unique links or tracking parameters to measure conversions per variant. For comment-based CTAs, track the proportion of comments to impressions and the quality of comments.
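If you log impressions per variant, a small helper can flag which variants have reached the thresholds mentioned above. This is a rough sketch: the 500 and 1,000 cutoffs come from the guidance in this section, while the status labels and the sample counts are invented for illustration.

```python
EARLY_SIGNAL_MIN = 500   # impressions needed for early signals
RELIABLE_MIN = 1000      # impressions needed for more reliable conclusions


def sample_status(impressions: int) -> str:
    """Classify a variant by how many impressions it has collected so far."""
    if impressions >= RELIABLE_MIN:
        return "reliable"
    if impressions >= EARLY_SIGNAL_MIN:
        return "early signal"
    return "keep collecting"


# Hypothetical impression counts per headline variant.
variant_impressions = {"numeric": 1340, "question": 720, "conversational": 410}
for label, impressions in variant_impressions.items():
    print(f"{label}: {impressions} impressions -> {sample_status(impressions)}")
```

A check like this is useful before you compare variants, since a comparison is only as strong as its smallest sample.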

Analysis tips:

Calculate the engagement rate per impression for each variant, then the relative lift between variants. Look beyond percentage differences and assess whether the change aligns with your business objective. If a variant increases comments but also brings low-quality or off-topic replies, evaluate whether the trade-off is acceptable. Run follow-up micro experiments on the winner to confirm results before scaling into Q4.
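The two calculations can be sketched in Python as follows. The numbers are hypothetical, and counting reactions, comments, and reshares together as "engagements" is an assumption, so adjust the definition to match your primary metric.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    label: str
    impressions: int
    engagements: int  # assumed: reactions + comments + reshares


def engagement_rate(result: VariantResult) -> float:
    """Engagements divided by impressions; 0.0 when there are no impressions."""
    if result.impressions == 0:
        return 0.0
    return result.engagements / result.impressions


def relative_lift(control: VariantResult, variant: VariantResult) -> float:
    """Relative change in engagement rate versus the control (0.25 means +25%)."""
    base = engagement_rate(control)
    if base == 0:
        raise ValueError("control has zero engagement rate; lift is undefined")
    return (engagement_rate(variant) - base) / base


# Hypothetical results for two headline variants.
control = VariantResult("question headline", impressions=1000, engagements=40)
variant = VariantResult("numeric headline", impressions=1200, engagements=60)

print(f"{engagement_rate(control):.1%}")          # 4.0%
print(f"{engagement_rate(variant):.1%}")          # 5.0%
print(f"{relative_lift(control, variant):+.0%}")  # +25%
```

Note that the variant's absolute engagement count is 50 percent higher, but the rate-based lift is 25 percent, because it also received more impressions.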

Sample experiment calendar for August

Here is a practical schedule you can adopt in August to run all five experiments while keeping cadence steady. The calendar assumes a 31-day month and staggers publish dates so variants of the same test do not compete for the same audience. Measurement windows keep running after each publishing week ends.

Week 1: Run headline test. Publish three variants on Monday, Wednesday, and Friday. Measure for 14 days from each publish date.

Week 2: Run hook test. Publish four hook variations across Tuesday and Thursday slots. Measure for 14 days each.

Week 3: Run CTA test. Publish each CTA variant across Monday, Wednesday, and Friday. Measure for 14 to 21 days depending on conversion velocity.

Week 4: Run image vs no image and timing experiments concurrently if audience size supports it. For timing, use repeated slots across the final two weeks. For image experiment, schedule three posts on comparable days and times.

Tracking and documentation:

Create a simple spreadsheet capturing publish date, time, variant label, impressions, engagement metrics, link clicks, conversions, and qualitative notes about comments.

Record notable external events that could affect reach such as company announcements or industry news to explain anomalies.

Use the final week of August to validate top performers and prepare to scale in September and Q4.
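If you would rather start the tracker as a CSV file than build it by hand, the sketch below writes the columns described above with Python's standard `csv` module. The column names, dates, and figures are all hypothetical, so rename or extend them to fit your own tracker.

```python
import csv
import io

# Assumed column names that mirror the tracker fields described above.
FIELDS = [
    "publish_date", "publish_time", "variant", "impressions",
    "reactions", "comments", "reshares", "link_clicks", "conversions", "notes",
]

# Hypothetical rows for two headline variants.
rows = [
    {"publish_date": "2025-08-04", "publish_time": "08:30", "variant": "headline-numeric",
     "impressions": 1200, "reactions": 38, "comments": 14, "reshares": 8,
     "link_clicks": 22, "conversions": 3, "notes": "several detailed replies"},
    {"publish_date": "2025-08-06", "publish_time": "08:30", "variant": "headline-question",
     "impressions": 1000, "reactions": 25, "comments": 11, "reshares": 4,
     "link_clicks": 15, "conversions": 1, "notes": "industry news day"},
]

# Written to an in-memory buffer here; open a file with newline="" to save it.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerows(rows)
print(buffer.getvalue())
```

The resulting file opens directly in any spreadsheet tool, and the notes column gives you a place to record external events next to the numbers they may explain.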

Examples of hypotheses and quick templates

Below are copy templates and measurable hypotheses you can copy into your experiment tracker. Each template uses a single variable change so you can test it cleanly.

Headline template numeric: "5 practical steps to reduce onboarding time by 30 percent" — Hypothesis: numeric headline will increase clicks and saves by 10 percent.

Headline template question: "What if onboarding could be faster without sacrificing quality" — Hypothesis: curiosity headline will increase comments by 15 percent.

Hook anecdote: "I spent a week with a team that cut onboarding in half. Here is what worked" — Hypothesis: anecdotal hook will increase comments about personal experience.

CTA soft: "Share your experience in the comments" — Hypothesis: soft CTA will boost comment volume by 20 percent and improve comment quality.

CTA direct: "Download the one page checklist" with a short link — Hypothesis: direct CTA will increase downloads but reduce comment volume.

Image test: headshot versus data graphic versus no image — Hypothesis: headshot will increase profile clicks, data graphic will increase saves.

Timing test: early morning versus late afternoon — Hypothesis: early morning will increase clicks, late afternoon will increase comments.

Use these templates to standardize tests across teams and ensure your A/B framework remains disciplined. Consistent documentation accelerates learning and helps you build a repository of what works for different audience segments and content categories.

Interpreting results and deciding when to scale

Once you complete tests, consolidate results in a dashboard that highlights lift versus control, confidence intervals if available, and qualitative insights from comments. Focus on the metrics that align with your Q4 goals. If the objective is leads, prioritize conversion metrics. If the objective is thought leadership, prioritize comments and reshares.
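If your dashboard does not provide confidence intervals, you can approximate one yourself. The Python sketch below uses a normal-approximation interval for the difference between two engagement rates. It assumes each impression either produces an engagement or does not, which is a simplification, and the counts are invented for illustration.

```python
import math


def diff_confidence_interval(control_eng, control_imp, variant_eng, variant_imp, z=1.96):
    """Approximate 95% interval (z=1.96) for variant rate minus control rate."""
    p_c = control_eng / control_imp
    p_v = variant_eng / variant_imp
    # Standard error of the difference between two independent proportions.
    se = math.sqrt(p_c * (1 - p_c) / control_imp + p_v * (1 - p_v) / variant_imp)
    diff = p_v - p_c
    return diff - z * se, diff + z * se


# Hypothetical counts: control 40 of 1,000 impressions, variant 60 of 1,200.
low, high = diff_confidence_interval(40, 1000, 60, 1200)
print(f"difference in engagement rate: {low:+.2%} to {high:+.2%}")
if low > 0 or high < 0:
    print("interval excludes zero")
else:
    print("interval includes zero; treat the lift as unconfirmed")
```

With these made-up counts the variant's rate is 5 percent against 4 percent for the control, yet the interval still spans zero. That is a useful reminder that a promising lift on roughly 1,000 impressions per variant may need a repeat run before you rely on it.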

Decision rules for scaling:

If a variant shows consistent lift of 10 percent or more in your primary metric across at least two runs, consider it a winner.

Validate winners with a follow-up test that changes a secondary variable to check robustness.

If variants produce mixed signals, run a targeted follow-up to isolate the influence of audience segments or posting context.
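The first decision rule can be written down as a small check so everyone on the team applies it the same way. This sketch reads "consistent lift" as every recorded run meeting the threshold, which is one possible interpretation, and the lift values are hypothetical.

```python
def is_winner(lifts, threshold=0.10, min_runs=2):
    """True when there are enough runs and every run meets the lift threshold.

    lifts holds the relative lift in the primary metric for each run,
    for example 0.12 for a 12 percent lift.
    """
    return len(lifts) >= min_runs and all(lift >= threshold for lift in lifts)


print(is_winner([0.12, 0.15]))  # True: two runs, both at or above 10 percent
print(is_winner([0.12]))        # False: only one run so far
print(is_winner([0.25, 0.04]))  # False: mixed signals, run a follow-up
```

Keeping the threshold and run count as parameters makes it easy to tighten the rule for high-stakes Q4 templates.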

Remember that small improvements compound. A 10 percent sustained increase in engagement across weekly content can significantly improve reach and conversions by Q4. The best LinkedIn engagement strategies are iterative rather than one-time fixes. Use August to build a playbook you can replicate and scale.

Practical tips to maintain momentum after August

After you identify winners, move promptly to lock in publishing templates for Q4. Create modular post templates that combine winning headlines, hooks, and CTAs. Use content batching to produce assets ahead of time so you can focus on community engagement when the seasonal traffic increases.

Additional operational tips:

Keep an experiment log with dates, variants, and top observations. This helps teams replicate wins.

Rotate successful templates every 3 to 4 weeks so your audience does not experience fatigue from repetitive wording.

Train moderators and community managers on responding quickly to early comments to increase distribution and build conversation depth.

By treating each experiment as a building block rather than a single data point, you can compound learnings and create a repeatable process for optimization. The best LinkedIn engagement strategies are those that tie incremental wins to measurable business outcomes and scale through consistent process.

Conclusion

Running A/B experiments in August gives you a low-risk window to refine the messaging that will power your Q4 initiatives. The five experiments covered here address the core levers of post performance: headlines, hooks, CTAs, visual presence, and timing. Each test is designed to be actionable with clear hypotheses, recommended measurement windows, and practical metrics to track. Use the headline tests to find wording that earns clicks and saves. Use hook experiments to improve read-through and stimulate comments. Test CTAs to understand what drives conversions for your specific audience. Evaluate image strategies to learn whether visuals or plain text best support your goals. Finally, use timing experiments to identify posting windows that consistently produce meaningful engagement.

Document every experiment, remain disciplined about changing one variable at a time, and normalize results by impressions. When a winner emerges, validate it with a follow-up confirmatory test before scaling. Keep the business objective front and center when you evaluate lift so that improvements align with Q4 goals. With a well-organized August testing plan you will arrive at the busy season with validated templates, scalable processes, and a clear understanding of which tactics deliver the best results for your audience.

Start by copying the sample calendar and hypothesis templates into a tracker, schedule the headline variants for week one and the hook variants for week two, and commit to a review cadence every Friday to capture insights. August experiments do not need to be complicated to be effective. Small, focused tests that follow the principles in this post will reveal the patterns you can lean on for Q4. The best LinkedIn engagement strategies are repeatable, measurable, and aligned to outcomes. Use this month to build those repeatable plays and set yourself up for a stronger finish to the year.